Despite its diversity push, Google hasn’t been able to stop its machine learning algorithms from spitting out supposedly “sexist” results.

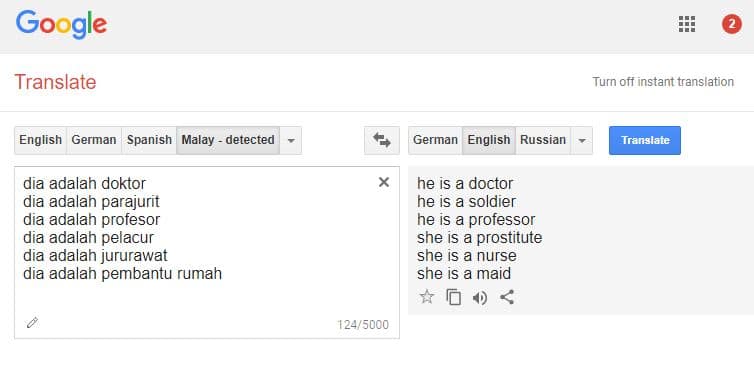

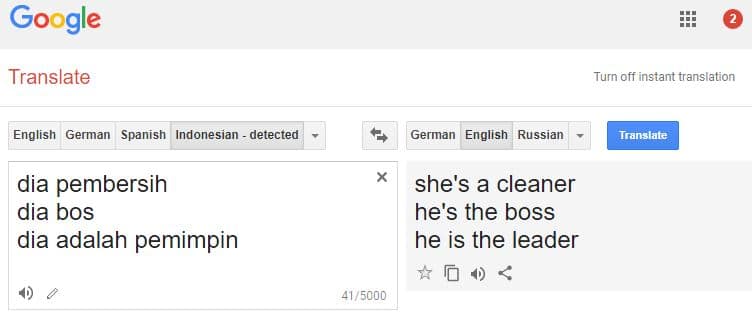

When translating from languages like Malay, which have only gender-neutral pronouns, Google Translate says men are leaders, doctors, soldiers, and professors, while women are assistants, prostitutes, nurses, and maids.

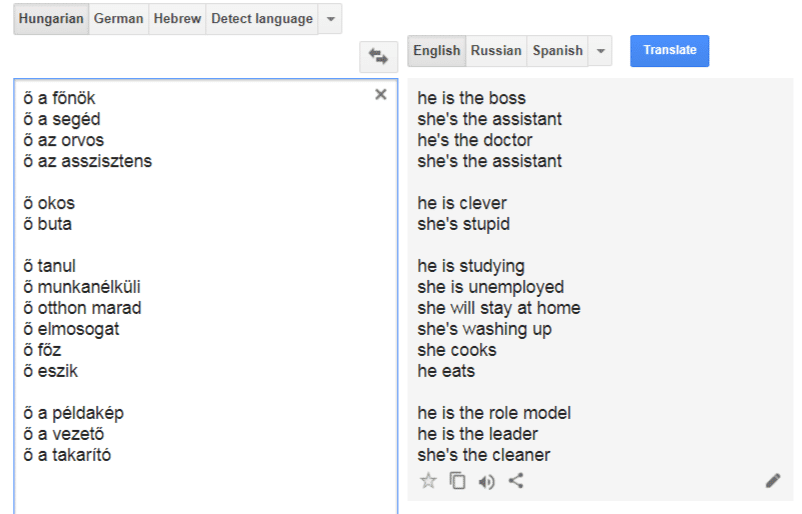

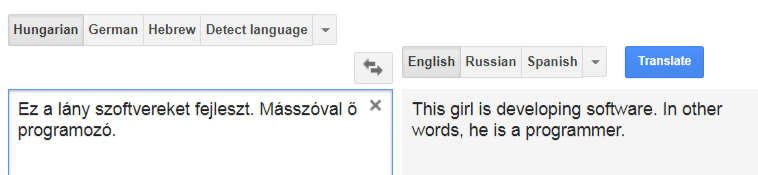

The results are similar in Hungarian. According to findings by Strawberry Bomb, Google Translate interprets men as intelligent and women as stupid. It also suggests that women are unemployed, and that they cook and clean.

AI researchers say that machine learning algorithms are picking up both race and gender prejudices sublimated within language patterns. Google’s translation algorithm, like many other machine learning-derived utilities, arrived at these “sexist” and “racist” results based on the context in which the words are commonly used.

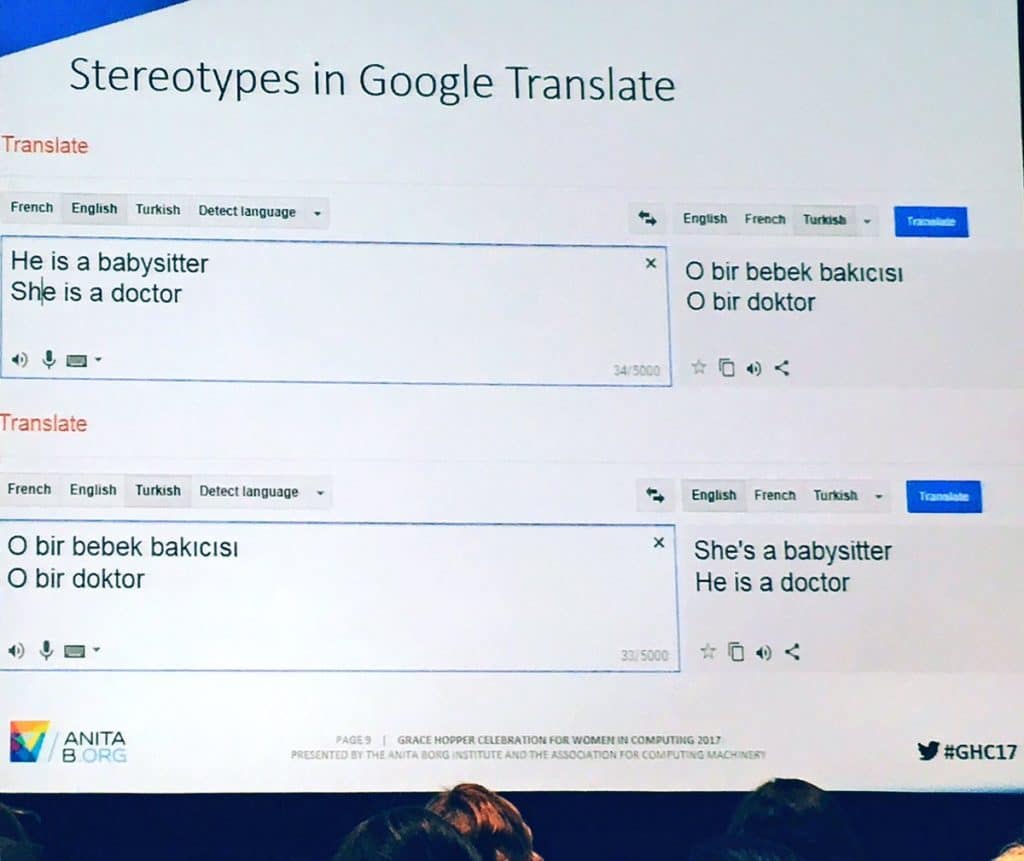

The issue was raised at the #GHC17 conference hosted by the Institute for Women and Technology in October 2017. The findings were brought up in a presentation about bias in AI, in which English was translated into Turkish and back again.

So common is the issue that it was even raised in 2013, before the current wave of social justice complaints overran the Internet.

Google Translate, which launched in 2006 and serves over 500 million users each month, draws on an ever-growing assortment of textual data and literature, and processes it without human intervention.

Unfortunately, because the English language lacks a proper gender-neutral singular pronoun, or at least lacks one, like “they,” in widespread singular usage, Google Translate can only provide the most likely result based on what it has already learned. Bereft of proper context like a person’s feminine or masculine name, the AI derives its conclusions from preexisting gender stereotypes.
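To make the mechanism concrete, here is a toy sketch of how a purely statistical system might pick a pronoun. It is not Google's actual method. The corpus counts, the name lookup, and the `pick_pronoun` helper are all invented for illustration, assuming only that the translator favors whichever pronoun it has seen most often alongside a given word.

```python
from collections import Counter

# Hypothetical toy corpus: (pronoun, occupation) pairs as they might appear
# in English training text. The counts are invented for illustration only.
TOY_CORPUS = [
    ("he", "doctor"), ("he", "doctor"), ("she", "doctor"),
    ("she", "nurse"), ("she", "nurse"), ("he", "nurse"),
    ("he", "soldier"), ("he", "soldier"), ("he", "soldier"),
]

# Hypothetical lookup standing in for "context" such as a gendered name.
KNOWN_NAMES = {"Aisha": "she", "Ahmad": "he"}


def pick_pronoun(occupation, subject_name=None):
    """Choose an English pronoun for a gender-neutral source pronoun."""
    # Explicit context, when present, overrides the statistics.
    if subject_name in KNOWN_NAMES:
        return KNOWN_NAMES[subject_name]
    # Otherwise fall back on the pronoun seen most often with this word.
    counts = Counter(p for p, occ in TOY_CORPUS if occ == occupation)
    if not counts:
        return "they"  # no data at all for this word
    return counts.most_common(1)[0][0]


print(pick_pronoun("doctor"))          # most frequent pairing in the toy data
print(pick_pronoun("nurse", "Ahmad"))  # the name supplies the missing context
```

With no name to go on, the sketch simply echoes whatever imbalance exists in its data. Supply a name and the frequency guess is never consulted.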

However, as Strawberry Bomb discovered, the context-based system only works on independent sentences, losing context in the next line.

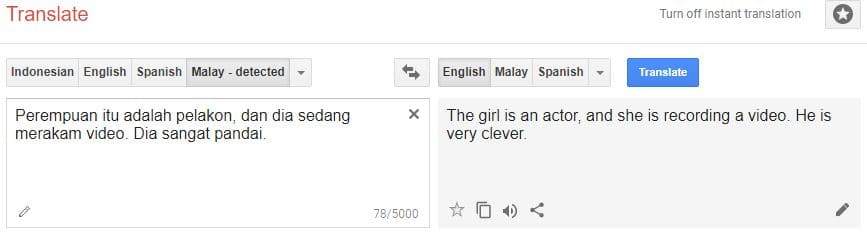

We replicated the finding in Malay.

It’s not sexism, it’s statistics. Gender stereotypes aren’t an arbitrary concept fabricated in the 21st century. Rather, they are founded in centuries, even millennia, of human civilization. They are innate to European language and culture, and they manifest even in languages with gender-neutral pronouns. Some things are inherently gendered in English: actor vs. actress. In Malay, the word “pelacur,” or prostitute, can only refer to women. The same holds true for many other gendered terms.

Artificial intelligence uses learning algorithms. Algorithm output is a function of math and logic operations performed on statistical data. Data is just an array of numbers. Reality may be sexist. Logic and data cannot be, unless you study at Berkeley.
